Important inventions are often framed as happening by “serendipity,” where a scientist with a prepared mind saw something, or dreamed something, or took LSD, and had this big breakthrough that ended up changing the world. Serendipity literally means “the occurrence and development of events by chance in a happy or beneficial way.” And yet, the more I read about famous inventions in molecular biology, the more I’m struck by how many (not all, but many) of them were deliberate creations with no “chance” involved. Indeed, the more you read about these histories, the more you begin to see a pattern in which great inventors “engineered their own serendipity,” a point that Ed Boyden has written about extensively.

The discovery of GFP, for example, was no accident. After nearly being blinded by the atomic bomb dropped on Nagasaki, Osamu Shimomura moved to the United States to study with Frank Johnson at Princeton. Johnson was already deeply fascinated by fluorescent molecules and knew about the jellyfish swimming off the coast of Friday Harbor, Washington, in which GFP would later be found. And Shimomura himself had already studied sea fireflies (crustaceans that emit light, widespread on the coast of Japan) in Nagasaki, so he came into the GFP project with lots of experience with fluorescence and a desire to isolate the molecules responsible.

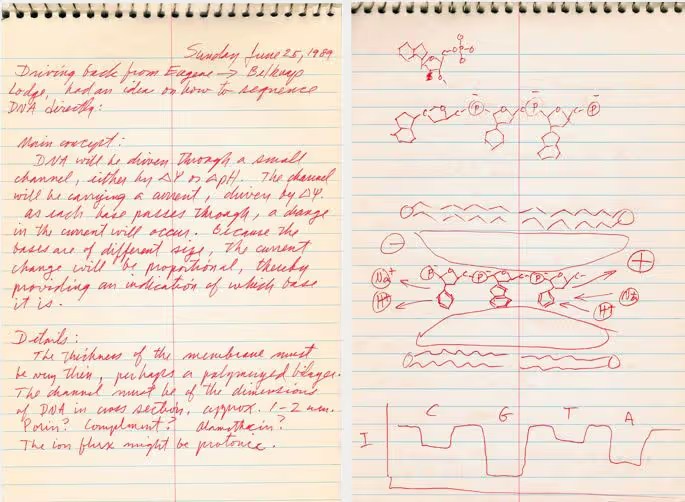

Nanopore sequencers, too, are often described as being invented by “serendipity,” because David Deamer was driving in his car on a California highway when, all of a sudden, he had this bolt of insight where he realized that nucleotides, moving through a protein pore, might be able to disrupt an electrical current in unique ways and thus be deciphered according to their “fingerprints.” Deamer pulled over, sketched out the idea in a notebook, and then spent the next seven years building it. But this story largely obscures the fact that Deamer had already spent more than a decade studying artificial cells and the ways that molecules move through cell membranes at UC Santa Cruz! He had been priming his mind to invent nanopore sequencers for many years.

The list goes on and on. (The micropipette, too, was invented in just three days by a frustrated postdoctoral student named Heinrich Schnitger, who got sick of using his mouth to move toxic liquids around. There was no “eureka” moment, really.)

But anyway, the reason I started writing this short essay was not to list off a bunch of inventions. My actual intention was to talk about optogenetics and how it came to pass, and how it serves as a powerful example of this “engineered serendipity” idea.

For context, optogenetics is a technology that uses light to control cells, often neurons. You first insert a gene encoding a channelrhodopsin into cells. The cells then make the protein, which naturally embeds itself into the cell membrane. Then, when you shine a light at the cell, some photons will strike this protein and force it open, allowing ions to rush inside and trigger an action potential. After optogenetics was invented in 2005, neuroscientists adapted it in many obvious ways; they found light-sensitive channels that could be used to silence neurons instead, and also engineered light-sensitive proteins that respond to different wavelengths. The end result is that now we have this toolbox whereby neuroscientists can “play the brain like a piano,” as Rafael Yuste, a neuroscientist at Columbia University, likes to say.

The invention of optogenetics was no accident, either. It was an act of “engineered serendipity.” Indeed, Francis Crick (who was ahead of his time on so many ideas) described ideas that seem similar to optogenetics, albeit without mentioning light, way back in 1979. Writing in Scientific American, Crick called for a technology which could “record from many neurons independently and simultaneously,” thus predating calcium sensors, tools that can do exactly this, by a few decades. He also wrote about his desire to create “a method by which all neurons of just one type could be inactivated, leaving the others more or less unaltered,” which is also possible today using a method called holographic optogenetics (in which a laser beam is split and redirected to different neurons simultaneously).

There is also evidence that optogenetics was invented twice, independently, by researchers who had no knowledge of each other. Zhuo-Hua Pan expressed channelrhodopsins in retinal ganglion cells, growing in a dish, in February 2004 and also in rats that summer. He submitted his paper to Nature in November 2004, a few months before Boyden & Deisseroth, but it was rejected because reviewers thought his technology was a way to restore vision, rather than a more broadly useful tool for neuroscience.

In any case, I’m struck by Boyden’s story of optogenetics because it so clearly conveys the act of discovery, as described by an engineer. The key breakthrough came about because the inventors wrote down the exact requirements they were looking for (specifically, a technology which could activate individual neurons with high spatiotemporal resolution) and then enumerated, or wrote down, all the ways they could possibly imagine to make this happen. Small molecules, magnets, electricity, light….

Here is how Boyden told this story in a speech to high school students last year:

“How did we make ourselves so lucky? … Simply put, try to think of every way of solving a problem… In this case, we made a list of all the forms of energy you can deliver to the brain – there’s light, sound, radio waves, a few other things. You can write the whole list down in a couple minutes. I liked light because it’s faster than anything else, and you can aim it precisely. Next question: how do you make brain cells sense light? Well, you can either design a tiny solar panel, or you can try to find one. That’s the whole list, just two cases. Finding one sounded easier. So we started emailing people, asking anyone who would listen – could you send us the light-driven molecule that you are studying, so we could put it into brain cells? And some people replied. We were in business! We took one molecule, put it in a brain cell, and as I told you, we could activate it with light. By writing down every way of solving a problem, in a systematic way, you can hone in on the best path. You may even find ideas you wouldn’t ordinarily think about. It helps you make a map of your own, when none is given to you.”

Boyden tells this story as if it were a “simple” thing, a process which could be taught and then executed again and again. I’d normally be skeptical of anybody trying to convince me that they can invent an incredibly useful technology on demand, but I trust Boyden on this point because he has actually invented a few important technologies, including expansion microscopy and new methods for connectomics. Naturally, I wonder where else we could “engineer serendipity” in biology, and also how we might teach this, but I don’t have satisfying answers. (If I did, I’d probably be a multi-millionaire, or at least have some patents by now!)

At the very least, it’s worth trying this approach in your own work. Start by precisely defining your needs, like “I want to turn neurons on and off at the millisecond timescale.” Then, write down a list of possibilities to do this. Weigh the pros and cons of each. Drugs are leaky and diffuse everywhere … electricity is too hard to control … light is cheap and abundant, and can be turned on or off quickly, and we can shine it at a narrow point …

Then, start emailing people. Ask for their advice. Perhaps you’ll quickly figure out that there is an alga, or some other photosynthetic microbe, that has already evolved proteins to sense light! Maybe you could use one of those. And even if you test one of these ideas and it doesn’t pan out, at least you’ll have a starting point to engineer the tool and troubleshoot your strategy.
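If it helps to see the "define the need, list the options, weigh them" step written out, here is a minimal Python sketch. To be clear, this is my own toy illustration and not how Boyden or anyone else actually worked: the `Candidate` class, the `shortlist` function, and the true/false ratings are all hypothetical, and the ratings are rough guesses that paraphrase the informal pros and cons above rather than measured data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """One way of delivering a signal to neurons (ratings are illustrative guesses)."""
    name: str
    fast: bool        # assumed: can be switched on and off at roughly millisecond timescales
    targetable: bool  # assumed: can be aimed at a narrow point
    note: str


# Hypothetical ratings that paraphrase the essay's informal pros and cons.
CANDIDATES = [
    Candidate("drugs", fast=False, targetable=False, note="leaky, diffuse everywhere"),
    Candidate("electricity", fast=True, targetable=False, note="hard to control"),
    Candidate("light", fast=True, targetable=True, note="cheap, quick to switch, narrow beam"),
]


def shortlist(candidates, requirements):
    """Return the candidates that satisfy every named boolean requirement."""
    return [c for c in candidates if all(getattr(c, r) for r in requirements)]


if __name__ == "__main__":
    # The "need" is written down first, as a list of required properties.
    for c in shortlist(CANDIDATES, ["fast", "targetable"]):
        print(f"{c.name}: {c.note}")
```

The code itself is trivial. What matters is that the requirements get written down before the options are judged, so that every option is checked against the same list.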

In biology, we often hear about how the “SEARCH SPACE IS INFINITE” and, in order to find a solution, we need to navigate through this combinatorial explosion of possibilities. The cell just has too many parameters! It’s a Black Box! Yada yada yada. But clearly there are ways to train your mind to precisely define what it is you’re trying to do, and what the specifications of a viable solution must look like, and then narrow down your search space, based on priors, to identify likely solutions that have already evolved in the infinite wisdom of Nature.

We ought to consider, therefore, not only how we can teach this process to students more systematically, but also which technologies we might build using this approach. Can we convene a workshop, or series of workshops, where we get lots of interesting people together in a room to discuss a technological need, and then we enumerate the options, weigh the pros and cons, and come up with a plan to find solutions? Let’s test it out.